Welcome to ShortScience.org!

- ShortScience.org is a platform for post-publication discussion aiming to improve accessibility and reproducibility of research ideas.

- The website has 1584 public summaries, mostly in machine learning, written by the community and organized by paper, conference, and year.

- Reading summaries of papers is useful to obtain the perspective and insight of another reader, why they liked or disliked it, and their attempt to demystify complicated sections.

- Also, writing summaries is a good exercise to understand the content of a paper because you are forced to challenge your assumptions when explaining it.

- Finally, you can keep up to date with the flood of research by reading the latest summaries on our Twitter and Facebook pages.

Using Deep Learning for Segmentation and Counting within Microscopy Data

Hernández, Carlos X. and Sultan, Mohammad M. and Pande, Vijay S.

arXiv e-Print archive - 2018 via Local Bibsonomy

Keywords: dblp


Given microscopy cell data, this work tries to determine the number of cells in the image. The whole pipeline is composed of two steps:

1. Cell segmentation:

- A Feature Pyramid Network (FPN) is used to generate a foreground mask;

- The last output of the FPN is used to predict mean foreground masks and aleatoric uncertainty masks. Each mask in both outputs is trained with an aleatoric loss $\frac{||y_{pred} - y_{gt}||^2}{2\sigma} + \log{2\sigma}$ and a [total-variation](https://en.wikipedia.org/wiki/Total_variation_denoising) loss.

https://i.imgur.com/ssTuGVe.png

2. Cell counting:

- A VGG-11 network is used to extract features from the predicted foreground segmentation masks. Two output branches follow the VGG: a cell count branch and an estimated variance branch. Training uses an L2 loss with aleatoric uncertainty for the cell counts.

https://i.imgur.com/aijZn7e.png
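The aleatoric loss used in both steps can be sketched in a few lines. This is a minimal illustration that follows the formula exactly as written in this summary (the paper itself may parameterize the variance differently); the function name `aleatoric_loss` and the flat-list inputs are assumptions made for the example.

```python
import math


def aleatoric_loss(y_pred, y_gt, sigma):
    """Mean of (y_pred - y_gt)^2 / (2*sigma) + log(2*sigma) over all elements.

    y_pred, y_gt and sigma are flat lists of equal length (e.g. flattened masks).
    sigma is the predicted per-element uncertainty and must be positive.
    """
    if not (len(y_pred) == len(y_gt) == len(sigma)) or not y_pred:
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for pred, gt, s in zip(y_pred, y_gt, sigma):
        if s <= 0:
            raise ValueError("sigma must be positive")
        # Residual term is down-weighted by a large sigma,
        # while the log term penalizes predicting a large sigma everywhere.
        total += (pred - gt) ** 2 / (2.0 * s) + math.log(2.0 * s)
    return total / len(y_pred)
```

The two terms pull in opposite directions: the network can lower the residual term by predicting a high uncertainty, but pays for it through the logarithm.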

While the idea of using neural networks to count cells in an image seems fascinating, the real benefit of such a system in production is questionable. Specifically, why add a VGG-like feature extractor on top of already predicted cell segmentation masks, when the segmentation network could do more of the work (i.e. separate cells better, predict objectness/contours) and the number of cells could be read directly from the predicted masks?
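To make the alternative concrete, here is a hedged sketch of counting cells directly from a binary mask with connected-component labelling. It is not something the paper does; `count_cells` and `min_area` are hypothetical names, and the approach only works if the segmentation already separates touching cells.

```python
from collections import deque


def count_cells(mask, min_area=1):
    """Count 4-connected foreground regions in a binary mask.

    mask is a list of rows containing 0/1 values. Regions smaller than
    min_area pixels are ignored (a crude filter for segmentation noise).
    """
    rows = len(mask)
    cols = len(mask[0]) if rows else 0
    seen = [[False] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill over one connected region.
            area = 0
            queue = deque([(r, c)])
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                area += 1
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if area >= min_area:
                count += 1
    return count
```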


MnasNet: Platform-Aware Neural Architecture Search for Mobile

Tan, Mingxing and Chen, Bo and Pang, Ruoming and Vasudevan, Vijay and Le, Quoc V.

arXiv e-Print archive - 2018 via Local Bibsonomy

Keywords: dblp


When machine learning models need to run on personal devices, that implies a very particular set of constraints: models need to be fairly small and low-latency when run on a limited-compute device, without much loss in accuracy. A number of human-designed architectural components have been engineered to try to solve for these constraints (depthwise convolutions, inverted residual bottlenecks), but this paper's goal is to use Neural Architecture Search (NAS) to explicitly optimize the architecture against latency and accuracy, to hopefully find a good trade-off curve between the two. This paper isn't the first time NAS has been applied to the problem of mobile-optimized networks, but a few choices are specific to this paper.

1. Instead of just optimizing against accuracy, or optimizing against accuracy with a sharp latency requirement, the authors here construct a weighted objective that includes both accuracy and latency, so that NAS can explore the space of different trade-off points, rather than only those below a sharp threshold.

2. They design a search space where individual sections or "blocks" of the network can be configured separately, with the hope being that this flexibility helps NAS trade off complexity more strongly in the early parts of the network, where, at a higher spatial resolution, it implies greater computation cost and latency, without necessarily dropping that complexity later in the network, where it might be lower-cost. Blocks here are specified by the type of convolution op, kernel size, squeeze-and-excitation ratio, use of a skip op, output filter size, and the number of times an identical layer of this construction will be repeated to constitute a block.

Mechanically, models are specified as discrete strings of tokens (a block is made up of tokens indicating its choices along these design axes, and a model is made up of multiple blocks). These are represented in an RL framework, where an RNN model sequentially selects tokens as "actions" until it gets to a full model specification. This is repeated multiple times to get a batch of models, which here functions analogously to an RL episode. These models are then each trained for only five epochs (it's desirable to use a full-scale model for accurate latency measures, but impractical to run its full course of training). After that point, accuracy is calculated, and latency is determined by running the model on an actual Pixel phone CPU. These two measures are weighted together to get a reward, which is used to train the RNN model-selection model using PPO.

https://i.imgur.com/dccjaqx.png

Across a few benchmarks, the authors show that models found with MnasNet optimization are able to reach parts of the accuracy/latency trade-off curve that prior techniques had not.
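As a rough illustration of how accuracy and latency can be folded into one reward, the sketch below uses a soft-constraint form, accuracy multiplied by (latency / target) raised to a negative exponent, which is my understanding of the paper's formulation. The function name `mnas_reward` and the default exponents are assumptions for this example, not values taken from the summary above.

```python
def mnas_reward(accuracy, latency_ms, target_ms, alpha=-0.07, beta=-0.07):
    """Combine accuracy and measured latency into a single scalar reward.

    accuracy   -- validation accuracy in [0, 1] after the short training run
    latency_ms -- latency measured on the target device
    target_ms  -- desired latency; the reward equals accuracy exactly at the target
    alpha/beta -- exponents used below/above the target (assumed values)
    """
    if latency_ms <= 0 or target_ms <= 0:
        raise ValueError("latencies must be positive")
    exponent = alpha if latency_ms <= target_ms else beta
    # A negative exponent shrinks the reward smoothly as latency grows,
    # instead of rejecting every model above a hard threshold.
    return accuracy * (latency_ms / target_ms) ** exponent
```

Because the penalty is smooth, a slightly slower but noticeably more accurate model can still score higher than a faster one, which is what lets the search explore the whole trade-off curve.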

Big Bird: Transformers for Longer Sequences

Zaheer, Manzil and Guruganesh, Guru and Dubey, Avinava and Ainslie, Joshua and Alberti, Chris and Ontañón, Santiago and Pham, Philip and Ravula, Anirudh and Wang, Qifan and Yang, Li and Ahmed, Amr

arXiv e-Print archive - 2020 via Local Bibsonomy

Keywords: transfer-learning, pre-trained, transformer, bert


Transformers - powered by self-attention mechanisms - have been a paradigm shift in NLP, and are now the standard choice for training large language models. However, while transformers do have many computational benefits - most saliently, that attention between tokens can be computed in parallel, rather than needing to be evaluated sequentially like in an RNN - a major downside is their memory (and, secondarily, computational) requirements. The baseline form of self-attention works by having every token attend to every other token, where "attend" here means that the query of each token A takes an inner product with the key of every other token, and the resulting softmax-normalized weights are used to form a weighted sum of those tokens' values. This implies an O(N^2) memory and computation requirement, where N is your sequence length. So, the question this paper asks is: how do you get the benefits, or most of the benefits, of a full-attention network, while reducing the number of other tokens each token attends to?

The authors' solution - Big Bird - has three components. First, they approach the problem of approximating full attention as a graph theory problem, where each token attending to every other is a "fully connected" graph, and the goal is to sparsify the graph in a way that keeps the shortest path between any two nodes low. They use the fact that in an Erdős-Rényi graph - where every edge is simply chosen to be on or off with some fixed probability - the shortest path between two nodes is known to be on the order of log N. In the context of aggregating information about a sequence, a short path between nodes means that the number of iterations, or layers, that it will take for information about any given node A to be part of the "receptive field" (so to speak) of node B, will be correspondingly short.
Based on this, they propose having the foundation of their sparsified attention mechanism be simply a random graph, where each node attends to each other node with probability k/N, where k is a tunable hyperparameter representing how many nodes each node attends to on average. To supplement, the authors further note that sequence tasks of interest - particularly language - are very local in their information structure, and, while it's important to understand the global context of the full sequence, tokens close to a given token are most likely to be useful in constructing a representation of it. Given this, they propose supplementing their random-graph attention with a block diagonal attention, where each token attends to w/2 tokens prior to and subsequent to itself (where, again, w is a tunable hyperparameter).

However, the authors find that these components aren't enough, and so they add a final component: having some small set of tokens that attend to all tokens, and are attended to by all tokens. This allows them to theoretically prove that Big Bird is a universal approximator of sequence functions and is Turing complete, both of which are true for full Transformers. I didn't follow the details of the proof, but, intuitively, my reading of this is that having a small number of these global tokens basically acts as a shortcut way for information to get between tokens in the sequence - if information is globally valuable, it can be "written" to one of these global aggregator nodes, and then all tokens will be able to "read" it from there. The authors do note that while their sparse model approximates the full transformer well in many settings, there are some problems - like needing to find the token in the sequence that a given token is farthest from in vector space - that a full attention mechanism could solve easily (since it directly calculates all pairwise comparisons) but that a sparse attention mechanism would require many layers to calculate.
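The three components can be combined into a single boolean attention mask. The following is a minimal sketch under stated assumptions: the name `bigbird_mask` and its parameters are hypothetical, the first `num_global` positions are arbitrarily chosen as the global tokens, and the random part follows the k/N edge probability described above rather than any released implementation.

```python
import random


def bigbird_mask(n, window=3, k=2, num_global=1, seed=0):
    """Return an n x n list of booleans; mask[i][j] is True if token i may attend to token j."""
    rng = random.Random(seed)
    half = window // 2  # w/2 tokens on each side of the current token
    mask = [[False] * n for _ in range(n)]
    for i in range(n):
        # Local component: a band around the diagonal.
        for j in range(max(0, i - half), min(n, i + half + 1)):
            mask[i][j] = True
        # Random component: each edge is switched on with probability k/n.
        for j in range(n):
            if rng.random() < k / n:
                mask[i][j] = True
    # Global component: these tokens attend to everything and are attended to by everything.
    for g in range(min(num_global, n)):
        for j in range(n):
            mask[g][j] = True
            mask[j][g] = True
    return mask
```

With `k` and `window` held fixed, the number of `True` entries grows roughly linearly in `n` instead of quadratically, which is the memory saving the paper is after.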
Empirically, Big Bird ETC (a version which adds on additional tokens for the global aggregators, rather than making existing tokens serve the purpose) performs the best on a big language model training objective, has comparable performance to existing models on question answering, and pretty dramatic performance improvements in document summarization. It makes sense for summarization to be a place where this model in particular shines, because it's explicitly designed to be able to integrate information from very large contexts (albeit in a randomly sampled way), where full-attention architectures must, for reasons of memory limitation, do some variant of a sliding window approach.

https://i.imgur.com/ks86OgJ.png

https://i.imgur.com/x0BdamC.png

A Neural Network Approach to Context-Sensitive Generation of Conversational Responses

Sordoni, Alessandro and Galley, Michel and Auli, Michael and Brockett, Chris and Ji, Yangfeng and Mitchell, Margaret and Nie, Jian-Yun and Gao, Jianfeng and Dolan, Bill

arXiv e-Print archive - 2015 via Local Bibsonomy

Keywords: dblp


TLDR; The authors propose three neural models to generate a response (r) based on a context and message pair (c,m). The context is defined as a single message. The first model, RLMT, is a basic Recurrent Language Model that is fed the whole (c,m,r) triple. The second model, DCGM-1, encodes context and message into a single BoW representation, puts it through a feedforward neural network encoder, and then generates the response using an RNN decoder. The last model, DCGM-2, is similar but keeps the representations of context and message separate instead of encoding them into a single BoW vector. The authors train their models on a data set of 29M triples from Twitter and evaluate using BLEU, METEOR and human evaluator scores.

#### Key Points:

- 3 Models: RLMT, DCGM-1, DCGM-2
- Data: 29M triples from Twitter
- Because (c,m) is very long on average, the authors expect RLMT to perform poorly.
- Vocabulary: 50k words, trained with NCE loss
- Generated responses degrade with length after ~8 tokens

#### Notes/Questions:

- Limiting the context to a single message kind of defeats the purpose of this. No real conversations have only a single message as context, and who knows how well the approach works with a larger context?
- Authors complain that dealing with long sequences is hard, but they don't even use an LSTM/GRU. Why?
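The difference between the DCGM-1 and DCGM-2 inputs described in the TLDR can be illustrated with plain bag-of-words vectors. This is only a sketch of the encoder input, not of the full models; the helper names and the tiny vocabulary are made up for the example.

```python
def bag_of_words(tokens, vocab):
    """Count vector over vocab (a dict mapping word -> index); unknown words are skipped."""
    vec = [0] * len(vocab)
    for token in tokens:
        idx = vocab.get(token)
        if idx is not None:
            vec[idx] += 1
    return vec


def dcgm1_input(context, message, vocab):
    """DCGM-1 style: context and message are merged into a single BoW vector."""
    return bag_of_words(context + message, vocab)


def dcgm2_input(context, message, vocab):
    """DCGM-2 style: separate BoW vectors for context and message, concatenated."""
    return bag_of_words(context, vocab) + bag_of_words(message, vocab)


vocab = {"hi": 0, "there": 1, "you": 2}
context = ["hi", "there"]
message = ["hi", "you"]
merged = dcgm1_input(context, message, vocab)    # length len(vocab)
separate = dcgm2_input(context, message, vocab)  # length 2 * len(vocab)
```

In the merged form the encoder can no longer tell which words came from the context and which from the message; the concatenated form keeps that distinction at the cost of a wider input layer.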

Combining Markov Random Fields and Convolutional Neural Networks for Image Synthesis

Li, Chuan and Wand, Michael

Conference on Computer Vision and Pattern Recognition - 2016 via Local Bibsonomy

Keywords: dblp


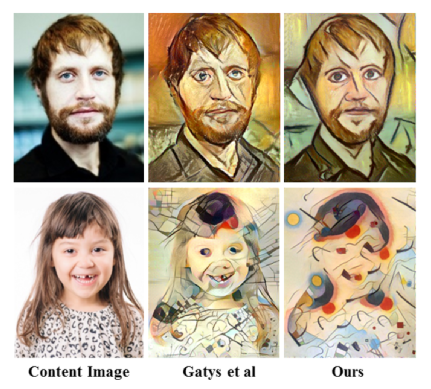

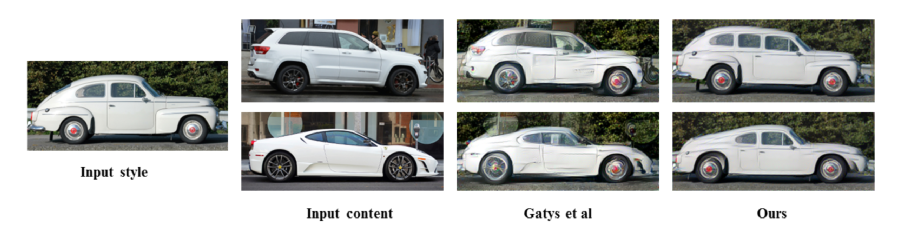

* They describe a method that applies the style of a source image to a target image.
  * Example: Let a normal photo look like a van Gogh painting.
  * Example: Let a normal car look more like a specific luxury car.
* Their method builds upon the well known artistic style paper and uses a new MRF prior.
  * The prior leads to locally more plausible patterns (e.g. fewer artifacts).

### How

* They reuse the content loss from the artistic style paper.
  * The content loss is calculated by feeding the source and target image through a network (here: VGG19) and then measuring the squared euclidean distance between the activations of one or more hidden layers.
  * They use layer `relu4_2` for the distance measurement.

* They replace the original style loss with a MRF based style loss.
  * Step 1: Extract from the source image `k x k` sized overlapping patches.
  * Step 2: Perform step (1) analogously for the target image.
  * Step 3: Feed the source image patches through a pretrained network (here: VGG19) and select the representations `r_s` from specific hidden layers (here: `relu3_1`, `relu4_1`).
  * Step 4: Perform step (3) analogously for the target image. (Result: `r_t`)
  * Step 5: For each patch of `r_s` find the best matching patch in `r_t` (based on normalized cross correlation).
  * Step 6: Calculate the sum of squared errors (based on euclidean distances) of each patch in `r_s` and its best match (according to step 5).

* They add a regularizer loss.
  * The loss encourages smooth transitions in the synthesized image (i.e. few edges, corners).
  * It is based on the raw pixel values of the last synthesized image.
  * For each pixel in the synthesized image, they calculate the squared x-gradient and the squared y-gradient and then add both.
  * They use the sum of all those values as their loss (i.e. `regularizer loss = <sum over all pixels> x-gradient^2 + y-gradient^2`).

* Their whole optimization problem is then roughly `image = argmin_image MRF-style-loss + alpha1 * content-loss + alpha2 * regularizer-loss`.

* In practice, they start their synthesis with a low resolution image and then progressively increase the resolution (each time performing some iterations of optimization).

* In practice, they sample patches from the style image under several different rotations and scalings.
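The patch-matching steps (5 and 6) and the regularizer can be sketched in plain Python. This is a simplified illustration, not the authors' code: it works on single-channel 2-D lists standing in for the feature maps `r_s` and `r_t`, uses a normalized dot product as the cross-correlation measure, and all function names are hypothetical.

```python
import math


def extract_patches(fmap, k):
    """All overlapping k x k patches of a 2-D list, each flattened into a list."""
    h, w = len(fmap), len(fmap[0])
    return [
        [fmap[y + dy][x + dx] for dy in range(k) for dx in range(k)]
        for y in range(h - k + 1)
        for x in range(w - k + 1)
    ]


def ncc(a, b):
    """Normalized cross-correlation of two flattened patches (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a > 0 and norm_b > 0 else 0.0


def mrf_style_loss(r_s, r_t, k=3):
    """Steps 5 and 6: match each patch of r_s to its best patch in r_t, then sum squared errors."""
    patches_s = extract_patches(r_s, k)
    patches_t = extract_patches(r_t, k)
    loss = 0.0
    for p in patches_s:
        best = max(patches_t, key=lambda q: ncc(p, q))
        loss += sum((x - y) ** 2 for x, y in zip(p, best))
    return loss


def regularizer_loss(image):
    """Sum over all pixels of squared x-gradient plus squared y-gradient (forward differences)."""
    h, w = len(image), len(image[0])
    loss = 0.0
    for y in range(h):
        for x in range(w):
            gx = image[y][x + 1] - image[y][x] if x + 1 < w else 0.0
            gy = image[y + 1][x] - image[y][x] if y + 1 < h else 0.0
            loss += gx * gx + gy * gy
    return loss
```

The exhaustive search in `mrf_style_loss` is quadratic in the number of patches, so a practical version would batch the matching on a GPU; the sketch only shows the logic of the loss.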

### Results

* In comparison to the original artistic style paper:
  * Fewer artifacts.
  * Their method tends to preserve style better, but content worse.
  * Can handle photorealistic style transfer better, so long as the images are similar enough. If no good matches between patches can be found, their method performs worse.

*Non-photorealistic example images. Their method vs. the one from the original artistic style paper.*

*Photorealistic example images. Their method vs. the one from the original artistic style paper.*
